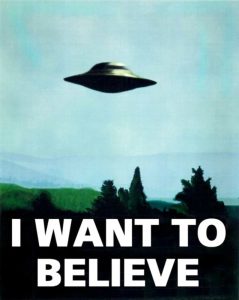

Modern digital methods of communication have become highly diverse, paradoxically making the exchange of information both easier and more complex. Critically, there is no longer a single canonical format for conveying written information or engaging in direct spoken communication. Handwritten or typed letters have been replaced by instant, informal streams of data, often broadcast not to a single recipient but to several family members at once, to groups of friends, or simply to any of the billions of people out there. Fonts, colours, layout and accompanying images can all carry meta-linguistic connotations, altering how words are perceived and understood. Choice and style are far more pervasive. Phones are no longer limited to simple speech or tethered by physical wires. Instead, they are mobile, multisensory devices with integrated cameras and a range of sensors that constantly generate data about the user, details of which can be automatically incorporated as an additional communication channel. Semiotics has become far more explicit, exaggerated and directly visual. Accessible technology is now a global reality that transcends cultural boundaries. Semantics and context have to be established in 140 characters or fewer. All these fast-moving changes permit a rich and rapidly growing complexity in human interaction. However, because communication behaviour has shifted so quickly, in both production and comprehension, there is still little understanding of how the different modalities interact to deliver an intended, or unintended, message. This is particularly true when information of unknown or ambiguous provenance and authenticity has to be evaluated. In other words, do you believe it? Do you trust it? Do you agree with it? What combination of factors will make you re-read, re-post, re-Tweet, or even just reply or comment rather than ignore it?

The Art of Persuasion has become a highly scientific pursuit with significant social and political impact. Why are we apparently living in a post-fact world? Is this trend just a natural and inevitable consequence of current attitudes and beliefs? Not only are some facts more equal than others, but some may not be facts at all. Robert Proctor (Stanford University) has spent many years investigating the spread of ignorance and coined the wonderful word “agnotology”. It is typically defined along the lines of “culturally constructed ignorance, purposefully created by special interest groups working hard to create confusion and suppress the truth”. Of course, the term itself could be construed as carrying a certain negative sentiment and an over-critical discourse bias. Fortunately, the subtitle of his co-edited book on the matter does emphasise the unmaking as well as the making of ignorance, but the technologies and tools to make or break agnotology have already changed dramatically in the few years since it was published.

Investigating how trust is established, examining the reliability and uptake of information, and determining the level of data quality is (I was tempted to write “lies”) at the heart of our neuropolitics research. This covers subtler, more implicit manipulations as well as direct, explicit presentation. Faith in evidence is required, even if faith is not commonly associated with science. Similarly, the change in the types of data being analysed means that new techniques have to be developed, applied and constantly reviewed, requiring a fully agile approach. Testing and validating a methodology is more important than any single dataset. Outside academia, data journalism has emerged as a term associated with objective and unbiased data transparency. This includes a global growth in fact-checking websites, with examples such as fullfact.org in the UK. Whether this represents a swing back from current anti-expert attitudes towards a more evidence-based approach remains to be seen.

[Proctor, R., & Schiebinger, L. L. (Eds.). (2008). Agnotology: The making and unmaking of ignorance. Stanford University Press.]